Summary

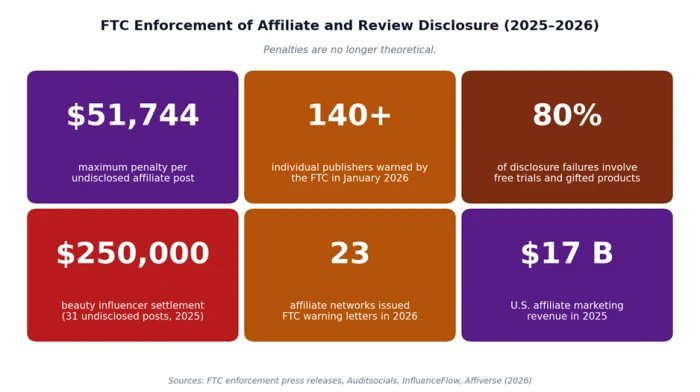

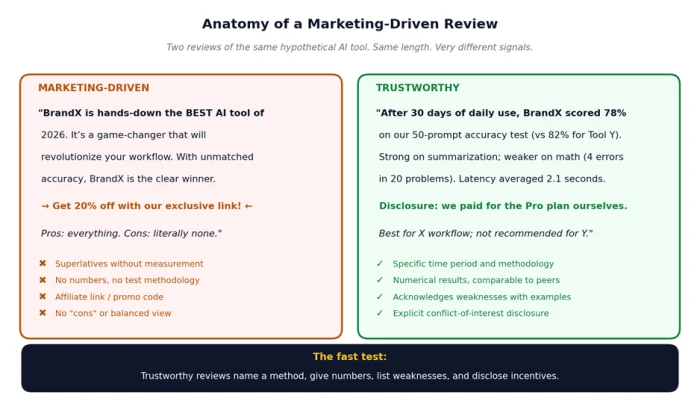

Many "AI tool reviews" published online are not reviews - they are marketing assets. In 2026 the FTC issued warning letters to 140+ publishers and 23 affiliate networks for failing to disclose paid relationships, with civil penalties of up to $51,744 per violation. Google’s January 2026 search update cut traffic to self-promotional listicles by an estimated 55%, and ChatGPT now cites those listicles 30% less than it did three months earlier. The fastest way to tell a real review from a marketing-driven one is to look for four signals: a stated method, numerical results, named weaknesses, and explicit disclosures.

Why AI Tool Reviews Often Mislead

AI is one of the fastest-growing software categories in history, and where money flows, marketing follows. The U.S. affiliate marketing industry generated $17 billion in revenue in 2025, and a substantial share of that revenue is tied to "best of" content, comparison articles, and tool reviews aimed at exactly the queries you are likely typing into Google or ChatGPT right now.

The problem is structural. Reviewers earn money when readers click through and convert, which creates a strong financial incentive to write positive reviews and bury negative ones. Independent industry research found that 80% of FTC disclosure failures involve undisclosed gifts and free trial products, and a 2026 academic analysis flagged that "best of" articles disproportionately rank the publisher’s own product first.

Search engines and AI models have started pushing back. Google’s January 2026 core update materially reduced traffic to sites that had built their growth on self-promotional listicles, and ChatGPT and Gemini both reduced citations of "best of" content over the same period.

Four Types of Marketing-Driven Reviews

Not all biased reviews are biased the same way. The funding model behind a review usually predicts the bias pattern. The four types below cover the vast majority of marketing-driven content you will encounter when researching an AI tool.

| Type | What it looks like | Who pays for it |
|---|---|---|
| Affiliate review | Independent-looking review with affiliate links to the recommended tool | Reviewer earns a commission on each signup or purchase |
| Sponsored review | Marked (or unmarked) "in partnership with..." review or video | Tool vendor pays a flat fee, free product, or both |
| Self-promotional listicle | "Best AI tools" article in which the publisher’s own product ranks #1 | No external payment - the publisher is the vendor |
| AI-generated SEO review | Templated prose with no first-hand testing or screenshots | Content farm or in-house team using LLMs at scale |

Table 1. The four common forms of marketing-driven AI tool reviews and who funds each.

These categories often overlap. A self-promotional listicle is usually also AI-generated and almost always lacks first-hand testing. The next three sections list the specific signals each category leaves behind, organized by where the signal appears - in the content itself, in the source publishing it, and in the structure of the review.

Red Flags in the Content

These are signals you can detect without leaving the review. They show up in word choice, level of detail, and balance.

Superlatives without measurement

Watch for "best," "ultimate," "game-changing," "revolutionary," "unmatched," and "hands-down." Real reviews tend to use comparative numbers ("scored 78% on our test, vs 82% for Tool Y") instead of unsupported superlatives. Repetitive marketing buzzwords across multiple reviews from the same site are a stronger signal than any single phrase.
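To make the buzzword check repeatable across several reviews from one site, a minimal Python sketch might count whole-word occurrences of the superlatives listed above. The function names and the `min_reviews` threshold are hypothetical choices for illustration, not an established tool.

```python
import re
from collections import Counter

# Terms taken from the list above; extend as needed (an assumption, not a standard list).
SUPERLATIVES = ["best", "ultimate", "game-changing", "revolutionary", "unmatched", "hands-down"]


def superlative_counts(text: str) -> Counter:
    """Count whole-word, case-insensitive occurrences of each listed superlative."""
    lowered = text.lower()
    counts = Counter()
    for term in SUPERLATIVES:
        # \b boundaries stop "best" from matching inside words like "bestseller".
        pattern = r"\b" + re.escape(term) + r"\b"
        n = len(re.findall(pattern, lowered))
        if n:
            counts[term] = n
    return counts


def repeated_across_site(reviews: list[str], min_reviews: int = 3) -> list[str]:
    """Return the superlatives that appear in at least `min_reviews` different reviews."""
    seen_in = Counter()
    for review in reviews:
        # Count each term once per review, regardless of how often it repeats inside it.
        seen_in.update(superlative_counts(review).keys())
    return sorted(term for term, n in seen_in.items() if n >= min_reviews)
```

A raw count is crude: "best" also appears in neutral phrases such as "best practices", so treat a hit as a prompt to reread the sentence, not as a verdict.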

No named methodology or test conditions

A trustworthy review specifies what was tested, for how long, on which version, and with what prompts or workload. A marketing-driven review skips straight to a verdict. If you cannot answer "how did they decide that?" after reading, the review has no method.

Generic praise that could fit any tool

Phrases like "intuitive interface" and "saves time" describe almost every modern SaaS product. Real reviews include details that only apply to this tool: a specific menu, a known bug, a feature that competitors don’t have. AI-generated reviews are particularly prone to this generic-praise pattern because language models default to safe, applicable phrasing.

No "cons" section, or trivial cons

Every real product has weaknesses. A review whose "cons" list is empty, or whose only complaint is something like "I wish there were more features" or "it could be slightly cheaper," is signalling avoidance of meaningful criticism. Look for cons that name a specific failure case the reader will encounter.

Quick test: Strip the product name out of the review. If the same words still describe ten other tools in the same category, the review has no informational content - only persuasion.

Red Flags in the Source

Some signals require you to look up who is publishing the review. These are usually faster to check than the content signals once you know what to look for.

The publisher sells the recommended tool

In 2026, SEO consultant Lily Ray documented approximately 30 sites running the same playbook: publishing 200+ "best of" listicles in which the publisher’s own product ranked #1 in nearly every list. If the company writing the review also makes one of the products on the list, the article is not a review - it is a sales page in disguise.

No named author, or AI-only author byline

A real review is signed by a real human with a verifiable history of work. A "By the [publisher] team" byline, or no byline at all, offers less accountability than a personal byline you can look up. Author profiles that list dozens of "expert" reviews across unrelated software categories within a few weeks usually indicate AI-assisted content production at scale.

Missing or buried disclosures

FTC guidance requires that any material connection - affiliate commissions, free products, sponsored relationships - be disclosed clearly and conspicuously, near the relevant content. Disclosures hidden in the footer, written in 8-point grey text, or worded vaguely ("we may earn a commission on some links") are warning signs even when they exist.

Domain pattern signals

Sites operating on exact-match domains for the category they review ("bestaitools.com," "topcrm.io"), sites that have published more than 50 "best of" articles in a year, and sites whose entire content library is product comparisons all warrant additional scrutiny. None of these is automatically disqualifying, but combined with content red flags they raise the probability that the review is marketing-driven.

Red Flags in the Structure

These signals are about how the article is built rather than what it says - patterns visible in the table of contents, the order of mentions, and the formatting of the call-to-action.

The recommended tool is conveniently #1

In legitimate ranked lists, the order of items is justified with reasoning. In self-promotional listicles, the publisher’s product is at the top with a one-line justification, and competitors are listed in declining detail. If you cannot tell why item #1 beats item #2, the ranking has no analytical foundation.

Affiliate links concentrated in the conclusion

Marketing-driven reviews almost always end with a "ready to try [Tool]?" call-to-action pointing to a tracked affiliate link. The link is the entire reason the review exists. Trustworthy reviews usually link to the vendor’s home page rather than a parameterised affiliate URL, and when they do use affiliate links, they disclose them.
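A tracked link is often visible in the URL itself. The Python sketch below assumes affiliate IDs travel as query-string parameters and checks them against a short list of parameter names. That list is a hypothetical sample for illustration; affiliate programs use many different names, and none of these is a standard.

```python
from urllib.parse import urlparse, parse_qs

# Hypothetical sample of query parameters that often carry affiliate or referral IDs.
AFFILIATE_PARAMS = {"ref", "aff", "aff_id", "affiliate", "via", "partner", "tag"}


def looks_like_affiliate_link(url: str) -> bool:
    """Heuristic: True if any query parameter name (case-insensitive) is in AFFILIATE_PARAMS."""
    query = parse_qs(urlparse(url).query)
    return any(key.lower() in AFFILIATE_PARAMS for key in query)
```

A miss does not prove a link is clean: many sites route affiliate clicks through their own redirect paths (for example a hypothetical `/go/tool-name`), which a query-only check cannot see. Campaign parameters such as `utm_source` are ordinary analytics tags rather than affiliate IDs, so the sketch deliberately ignores them.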

Identical structure across many reviews from one site

When every review on a site uses the same H2 headings ("Pros," "Cons," "Pricing," "Final Verdict") in the same order, with the same word counts, the content is being generated from a template. This is fine when the template is good and the testing is real. It is a warning sign when combined with generic praise and no methodology.

Stock imagery only

A real review of an AI tool includes screenshots of the interface, a video walkthrough, or original test artifacts. A review that uses only stock photos of "people using laptops" almost certainly was not written by anyone who used the product.

The 13-Point Trust Scorecard

The scorecard below converts the signals above into a single number you can apply to any AI tool review in roughly two minutes. Award the listed points for each question that the review clearly satisfies. The maximum possible score is 13.

| # | Scoring question | Points |
|---|---|---|
| 1 | The reviewer states a specific test methodology (time period, prompts used, hardware, version). | +2 pts |
| 2 | The review contains numerical results (accuracy %, latency, token costs, error counts). | +2 pts |
| 3 | At least one weakness, limitation, or failed test case is named. | +1 pt |
| 4 | The reviewer compares the tool to one or more direct competitors with results. | +1 pt |
| 5 | Any affiliate link, free product, or paid relationship is disclosed in or near the review. | +2 pts |
| 6 | The reviewer is a real, identifiable person or established outlet (not anonymous, not a bot). | +1 pt |
| 7 | The publication is not the same company as the tool being reviewed. | +1 pt |
| 8 | There are screenshots, videos, or original test artifacts - not just stock images. | +1 pt |
| 9 | The review has been updated within the last 6 months for the current tool version. | +1 pt |
| 10 | The conclusion includes a "not recommended for..." or "skip if you need..." section. | +1 pt |

Table 2. The Trust Scorecard. Award the points listed when the review clearly meets the criterion.

Interpreting your score

| Score | What it means |
|---|---|
| 11–13 | Trustworthy. Use this review with normal critical reading. |
| 7–10 | Mixed. Useful but supplement with at least one other source. |
| 4–6 | Weak. Treat the review as a vendor pitch with extra information. |
| 0–3 | Marketing-driven. Do not rely on this review at all. |

Table 3. How to read the scorecard total.
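For readers who want to score several reviews of the same tool side by side, the point values from Table 2 and the bands from Table 3 fit in a few lines of Python. This is a sketch: the question numbers match Table 2, while the names `trust_score` and `interpret` and the two example reviews are hypothetical.

```python
# Points awarded per scorecard question, keyed by the question numbers in Table 2.
POINTS = {1: 2, 2: 2, 3: 1, 4: 1, 5: 2, 6: 1, 7: 1, 8: 1, 9: 1, 10: 1}

# Score bands from Table 3 as (minimum score, label), ordered highest first.
BANDS = [
    (11, "Trustworthy"),
    (7, "Mixed"),
    (4, "Weak"),
    (0, "Marketing-driven"),
]


def trust_score(satisfied: set[int]) -> int:
    """Sum the points for every question the review clearly satisfies."""
    unknown = satisfied - set(POINTS)
    if unknown:
        raise ValueError(f"unknown question numbers: {sorted(unknown)}")
    return sum(POINTS[q] for q in satisfied)


def interpret(score: int) -> str:
    """Map a total between 0 and 13 to its Table 3 band."""
    if not 0 <= score <= sum(POINTS.values()):
        raise ValueError(f"score out of range: {score}")
    for minimum, label in BANDS:
        if score >= minimum:
            return label
    raise AssertionError("unreachable: the lowest band starts at 0")


if __name__ == "__main__":
    # Two hypothetical reviews of the same tool, listed by the questions each satisfies.
    reviews = {
        "review A": {1, 2, 3, 5, 6, 7, 8},
        "review B": {6, 8},
    }
    for name, satisfied in reviews.items():
        total = trust_score(satisfied)
        print(f"{name}: {total}/13 -> {interpret(total)}")
```

Run as a script, this prints `review A: 10/13 -> Mixed` and `review B: 2/13 -> Marketing-driven`. Storing the bands as minimum thresholds, checked from the top down, keeps Table 3 in one place if you later adjust the cut-offs.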

Practical use: Run two or three reviews of the same tool through the scorecard before making any purchase decision over $20/month. The exercise takes five minutes and almost always changes which review you trust most.

Where to Find Trustworthy Reviews

Marketing-driven reviews are easier to find than honest ones because they are designed for search visibility. Honest reviews are usually further down the results page. The categories of source below tend to score higher on the trust scorecard than the average affiliate blog.

• Independent benchmarks and leaderboards. Sources like LMSYS Chatbot Arena, SWE-bench, and academic papers report measured numbers and are far less exposed to affiliate incentives than review blogs.

• User-generated platforms. Reddit subreddits for specific tools, GitHub issues, and Hacker News discussions surface specific bugs and limitations marketing reviews omit. Treat them as anecdotal but useful.

• Long-form video reviews from established creators. Channels with multi-year track records of criticising products are more accountable than text blogs because their bias is visible across many videos.

• Trade publications and analyst firms. Publications like Ars Technica, The Verge, IEEE Spectrum, and analyst reports from firms such as Gartner and Forrester have editorial separation between content and commerce, even when imperfect.

• Customer review platforms with verification. G2, TrustRadius, and Capterra require buyer verification, which raises the cost of fake reviews - though paid-review schemes still exist there too.

For the most accurate picture, triangulate: read at least one independent benchmark, one verified user review, and one in-depth long-form review. Discrepancies between sources are themselves a signal.

Conclusion

The fastest, most reliable filter for AI tool reviews in 2026 is structural, not stylistic. Trustworthy reviews share four traits: a stated method, numerical results, named weaknesses, and explicit disclosures. Marketing-driven reviews almost always lack all four. Once you know what to look for, the difference takes seconds to spot.

Run any review you rely on through the 13-point scorecard before making a paid purchase. Cross-check at least one independent benchmark and one verified user review. Treat self-promotional listicles, especially "best of" articles in which the publisher ranks itself first, as marketing copy until proven otherwise.
